
Apache Kafka is an open-source stream-processing software platform developed by LinkedIn and donated to the Apache Software Foundation, written in Scala and Java. The project aims to provide a unified, high-throughput, low-latency platform for handling real-time data feeds. Its storage layer is essentially a 'massively scalable pub/sub message queue designed as a distributed transaction log,' making it highly valuable for enterprise infrastructures to process streaming data. Kafka can also connect to external systems (for data import/export) via Kafka Connect and provides Kafka Streams, a Java stream processing library. The design is heavily influenced by transaction logs.

History

Apache Kafka was originally developed by LinkedIn and was subsequently open sourced in early 2011. Graduation from the Apache Incubator occurred on 23 October 2012. In 2014, Jun Rao, Jay Kreps, and Neha Narkhede, who had worked on Kafka at LinkedIn, created a new company named Confluent with a focus on Kafka. According to a Quora post from 2014, Kreps chose to name the software after the author Franz Kafka because it is 'a system optimized for writing', and he liked Kafka's work.

Applications

Apache Kafka is based on the commit log, and it allows users to subscribe to it and publish data to any number of systems or real-time applications. Example applications include managing passenger and driver matching, providing real-time analytics for smart home products, and performing numerous real-time services across all of LinkedIn.

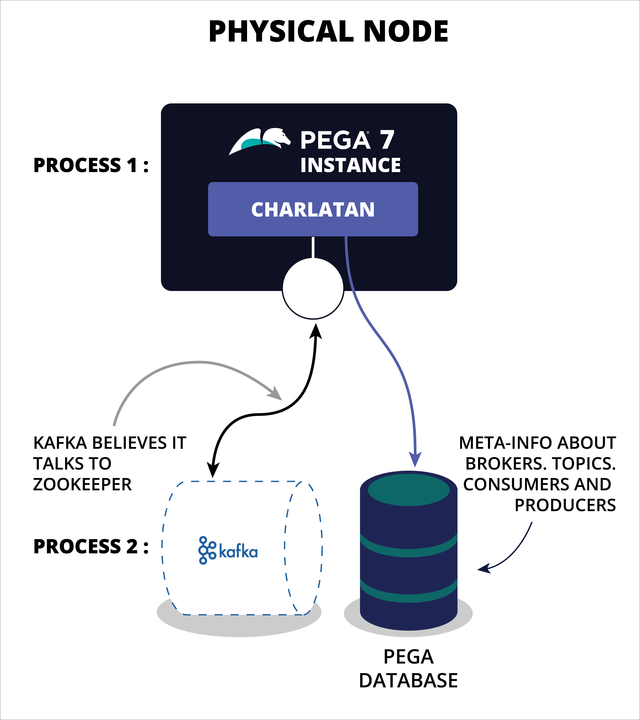

Apr 15, 2015. Applications generate more data than ever before, and a huge part of the challenge, before the data can even be analyzed, is accommodating the load in the first place. Apache Kafka meets this challenge. It was originally designed by LinkedIn and subsequently open-sourced in 2011. The project aims to provide a unified, high-throughput, low-latency platform for handling real-time data.

Apache Kafka architecture

Overview of Kafka

Kafka stores key-value messages that come from arbitrarily many processes called producers. The data can be partitioned into different 'partitions' within different 'topics'.
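The producer, topic, and partition model can be illustrated with a small in-memory sketch. This is not the Kafka client API: the `Topic` class, its methods, and the CRC32-based partitioner are hypothetical names chosen for illustration, and real Kafka clients use their own partitioning logic. The sketch only shows that messages with the same key land in the same partition, and that each message's position in its partition (what Kafka calls its offset) grows as messages are appended.

```python
import zlib


class Topic:
    """Toy in-memory topic: a fixed number of append-only partitions."""

    def __init__(self, name, num_partitions):
        self.name = name
        # Each partition is an ordered list of (key, value) messages.
        self.partitions = [[] for _ in range(num_partitions)]

    def partition_for(self, key):
        # Hypothetical partitioner: stable hash of the key modulo the
        # partition count. Assumed for illustration only.
        return zlib.crc32(key.encode("utf-8")) % len(self.partitions)

    def produce(self, key, value):
        """Append a message and return (partition, offset)."""
        partition = self.partition_for(key)
        log = self.partitions[partition]
        log.append((key, value))
        return partition, len(log) - 1

    def consume(self, partition, offset):
        """Return all messages in a partition starting at the given offset."""
        return self.partitions[partition][offset:]


topic = Topic("rides", num_partitions=3)
p1, o1 = topic.produce("driver-7", "online")
p2, o2 = topic.produce("driver-7", "assigned")

# Same key, same partition; offsets increase in append order.
print(p1 == p2, o1, o2)
print(topic.consume(p1, o1))
```

Because ordering is only defined per partition, routing by key is what keeps all updates for one key in order relative to each other.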

Within a partition, messages are strictly ordered by their offsets (the position of a message within a partition), and indexed and stored together with a timestamp. Other processes called 'consumers' can read messages from partitions. For stream processing, Kafka offers the Streams API that allows writing Java applications that consume data from Kafka and write results back to Kafka. Apache Kafka also works with external stream processing systems.

Kafka runs on a cluster of one or more servers (called brokers), and the partitions of all topics are distributed across the cluster nodes. Additionally, partitions are replicated to multiple brokers. This architecture allows Kafka to deliver massive streams of messages in a fault-tolerant fashion and has allowed it to replace some of the conventional messaging systems like Java Message Service (JMS), Advanced Message Queuing Protocol (AMQP), etc. Since the 0.11.0.0 release, Kafka offers transactional writes, which provide exactly-once stream processing using the Streams API.

Kafka supports two types of topics: regular and compacted.

Regular topics can be configured with a retention time or a space bound. If there are records that are older than the specified retention time or if the space bound is exceeded for a partition, Kafka is allowed to delete old data to free storage space. By default, topics are configured with a retention time of 7 days, but it's also possible to store data indefinitely. For compacted topics, records don't expire based on time or space bounds. Instead, Kafka treats later messages as updates to older messages with the same key and guarantees never to delete the latest message per key.
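The difference between the two cleanup behaviours can be sketched on a single partition held as a Python list. The function names `apply_retention` and `compact` and the `(timestamp, key, value)` record layout are assumptions made for this example, not Kafka APIs. The sketch is also simplified: it works on individual records, whereas a real broker manages data in larger log segments and runs cleanup in the background.

```python
SEVEN_DAYS = 7 * 24 * 60 * 60  # default retention time from the text, in seconds


def apply_retention(log, now, retention_seconds=SEVEN_DAYS):
    """Regular topic: drop records older than the retention time.

    Each record is a (timestamp, key, value) tuple.
    """
    cutoff = now - retention_seconds
    return [record for record in log if record[0] >= cutoff]


def compact(log):
    """Compacted topic: keep only the latest record for each key.

    Surviving records keep their original relative order.
    """
    last_index = {}
    for i, (_, key, _) in enumerate(log):
        last_index[key] = i  # later records overwrite earlier ones
    return [record for i, record in enumerate(log) if last_index[record[1]] == i]


log = [
    (0, "a", 1),
    (100, "b", 2),
    (200, "a", 3),
]

# Retention with a 100-second window at time 250 keeps only recent records.
print(apply_retention(log, now=250, retention_seconds=100))

# Compaction ignores age and keeps the newest value per key.
print(compact(log))
```

Note that compaction keeps the record for key "b" even though it is older than the second record for key "a", because it is still the latest value for its key.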